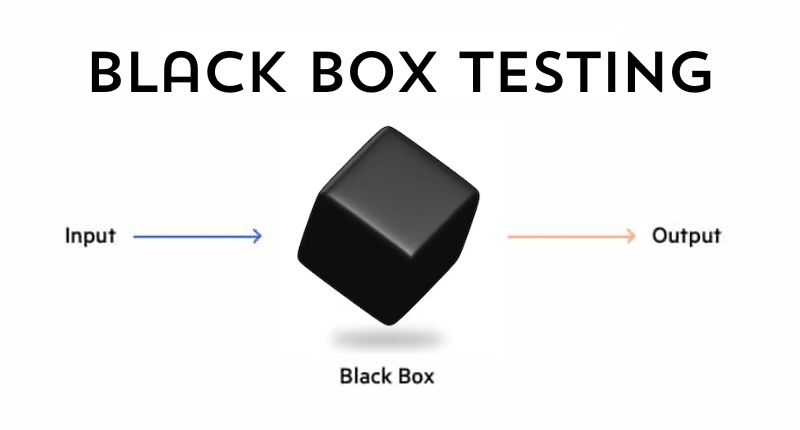

Understanding Black Box Testing

Black box testing is a software testing method in which the tester has no knowledge of the application’s internal structure or code. It covers a range of techniques for uncovering defects and weaknesses in a product, and in security testing it mirrors how an external attacker probes for exploitable gaps. Test cases are derived solely from the software’s requirements and specifications, and they check that given inputs produce the expected outputs. Because it examines observable behavior rather than implementation, it is also known as behavioral testing.

Advantages of Black Box Testing

Black box testing offers numerous benefits:

Inclusive Tester Participation: It enables individuals without programming or technical expertise to effectively perform testing tasks.

User-Centric Approach: It evaluates software functionality from the end-user’s perspective, ensuring alignment with user expectations.

Error Identification: It can detect problems that are not apparent from reading the code, including usability, performance, security, and compatibility issues.

Versatile Testing Environment: It facilitates software testing across diverse environments and platforms, ensuring compatibility and adaptability.

Challenges in Black Box Testing

Nevertheless, black box testing presents several challenges:

Resource-Intensive Nature: Designing and executing test cases can be time-consuming and financially demanding.

Hidden Errors: It can miss defects on code paths that no test case happens to exercise, such as logic or algorithmic errors in rarely executed branches.

Redundancy in Testing: Without visibility into the code, testers may write redundant test cases that cover the same functionality multiple times.

Error Cause Identification Complexity: Because testers see only inputs and outputs, tracing a failure back to its root cause in the code can be difficult.

Techniques in Black Box Testing

A range of techniques aids in black box testing:

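Equivalence Class Testing: Reduces the number of test cases while maintaining coverage by dividing the input domain into classes whose members the system should treat the same way, then testing one representative value from each class.

Boundary Value Testing: Focuses on values at and immediately around the edges of valid input ranges, where off-by-one and comparison errors are most likely, to check that the system accepts and rejects inputs correctly.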
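As a minimal sketch of the equivalence class and boundary value techniques, assume a hypothetical function `is_valid_age` whose specification says it accepts ages from 18 to 65 inclusive. The function, its range, and the chosen test values are illustrative assumptions and do not come from any particular system.

```python
def is_valid_age(age: int) -> bool:
    """Hypothetical system under test: accepts ages 18 through 65 inclusive."""
    return 18 <= age <= 65


# Equivalence classes: one representative value per class is assumed to be enough.
EQUIVALENCE_CASES = {
    "below valid range": (10, False),
    "inside valid range": (40, True),
    "above valid range": (80, False),
}

# Boundary values: each edge of the valid range plus its immediate neighbors.
BOUNDARY_CASES = [
    (17, False), (18, True), (19, True),
    (64, True), (65, True), (66, False),
]


def run_cases():
    """Return the cases whose actual result differs from the expected one."""
    failures = []
    for name, (value, expected) in EQUIVALENCE_CASES.items():
        if is_valid_age(value) != expected:
            failures.append((name, value))
    for value, expected in BOUNDARY_CASES:
        if is_valid_age(value) != expected:
            failures.append(("boundary", value))
    return failures


failures = run_cases()
print("failures:", failures)
```

The test data is derived only from the stated range, not from the body of `is_valid_age`, which is what keeps the example black box.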

Decision Table Testing: Utilizes a matrix format to test complex business rules and scenarios effectively.
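A decision table can be written directly as test data. The sketch below assumes a hypothetical business rule, "members and orders over 50 ship free", implemented by an illustrative function `free_shipping`. Each row of the table lists one combination of conditions and the expected outcome.

```python
def free_shipping(is_member: bool, order_over_50: bool) -> bool:
    """Hypothetical rule under test: members or orders over 50 ship free."""
    return is_member or order_over_50


# Decision table: every combination of the two conditions, with the expected action.
DECISION_TABLE = [
    # is_member, order_over_50, expected_free_shipping
    (True,  True,  True),
    (True,  False, True),
    (False, True,  True),
    (False, False, False),
]


def check_decision_table(rule, table):
    """Return the rows for which the rule's output differs from the expected column."""
    return [row for row in table if rule(*row[:-1]) != row[-1]]


mismatches = check_decision_table(free_shipping, DECISION_TABLE)
print("mismatching rows:", mismatches)
```

With two boolean conditions the table has four rows. Each additional condition doubles the row count, which is one reason the technique is usually reserved for rules with a small number of conditions.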

State Transition Testing: Assesses system behavior under different states and transitions, beneficial for dynamic systems.
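To illustrate state transition testing, the sketch below assumes a hypothetical `Order` class with the states created, paid, shipped, and cancelled. The class is only a stand-in for a real system. The test cases drive it with event sequences and compare the observable end state with the expected one, including sequences that the system should reject.

```python
class Order:
    """Hypothetical system under test: a small order workflow."""

    _ALLOWED = {
        ("created", "pay"): "paid",
        ("created", "cancel"): "cancelled",
        ("paid", "ship"): "shipped",
        ("paid", "cancel"): "cancelled",
    }

    def __init__(self) -> None:
        self.state = "created"

    def handle(self, event: str) -> None:
        key = (self.state, event)
        if key not in self._ALLOWED:
            raise ValueError(f"{event!r} is not allowed in state {self.state!r}")
        self.state = self._ALLOWED[key]


def final_state(events):
    """Drive a fresh order through the events; return the end state,
    or "rejected" if the system refuses one of the events."""
    order = Order()
    try:
        for event in events:
            order.handle(event)
    except ValueError:
        return "rejected"
    return order.state


# Each case: (event sequence, expected observable outcome).
TRANSITION_CASES = [
    (["pay", "ship"], "shipped"),
    (["pay", "cancel"], "cancelled"),
    (["cancel"], "cancelled"),
    (["ship"], "rejected"),                   # cannot ship before payment
    (["pay", "ship", "cancel"], "rejected"),  # cannot cancel after shipping
]

failed = [(events, expected) for events, expected in TRANSITION_CASES
          if final_state(events) != expected]
print("failed cases:", failed)
```

Covering invalid transitions as well as valid ones matters here, since defects in stateful systems often appear as events accepted in a state where they should be refused.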

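All-Pair Testing: Also called pairwise testing, it selects test cases so that every combination of values for each pair of parameters appears at least once, which needs far fewer cases than testing every full combination. It suits systems with many parameters that interact.

Cause-Effect Graph Testing: Models the logical relationships between causes (input conditions) and effects (outputs) as a graph from which test cases are derived, which suits systems with multiple inputs and outputs.

Equivalence Partitioning Testing: An alternative name for equivalence class testing, in which the input domain is divided into partitions of equivalent values and one value from each partition is tested.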
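The sketch below shows one simple way to build a pairwise test suite: a greedy selection that repeatedly picks the full combination covering the most value pairs not yet covered. The parameter names and values are illustrative assumptions. The greedy approach is not guaranteed to produce the smallest possible suite, and dedicated pairwise tools use more refined algorithms.

```python
from itertools import combinations, product


def pairs_of(case):
    """All value pairs covered by one test case, tagged with parameter positions."""
    return {((i, case[i]), (j, case[j]))
            for i, j in combinations(range(len(case)), 2)}


def all_pairs(parameters):
    """Greedy pairwise suite: keep picking the combination that covers
    the largest number of still-uncovered pairs until none remain."""
    candidates = list(product(*parameters))
    uncovered = set().union(*(pairs_of(c) for c in candidates))
    suite = []
    while uncovered:
        best = max(candidates, key=lambda c: len(pairs_of(c) & uncovered))
        suite.append(best)
        uncovered -= pairs_of(best)
    return suite


# Hypothetical configuration parameters for a web application.
PARAMETERS = [
    ["chrome", "firefox", "safari"],   # browser
    ["windows", "macos", "linux"],     # operating system
    ["en", "de"],                      # locale
]

exhaustive = list(product(*PARAMETERS))
suite = all_pairs(PARAMETERS)
print(f"exhaustive: {len(exhaustive)} cases, pairwise: {len(suite)} cases")
for case in suite:
    print(case)
```

Every pair of values, for example a given browser with a given locale, still appears in at least one selected case, so a defect triggered by the interaction of two parameters remains detectable.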

Error Guessing Testing: Relies on tester intuition and experience to identify potential system errors.

Use Case Testing: Evaluates systems based on user actions and expectations, ensuring usability and functionality.

User Story Testing: Tests systems based on user needs and goals, aligning software functionalities with user expectations.

Understanding AI System Principles

AI system principles are guidelines for developing and using AI so that systems are ethical, trustworthy, and beneficial to humanity and society. Common AI principles include:

Social Benefit: Prioritizing AI systems that contribute to human well-being while respecting cultural and legal norms.

Fairness: Avoiding biases, ensuring equal treatment for all individuals, and maintaining transparency and accountability.

Safety: Ensuring secure, robust AI systems that avoid causing harm.

Privacy: Protecting user data and complying with relevant data protection laws.

Inclusiveness: Ensuring AI systems are accessible and inclusive for all individuals, regardless of background.

Excellence: Upholding high scientific standards and fostering innovation in AI development.

Conclusion

These AI system principles provide a comprehensive framework for evaluating the ethical and societal implications of AI. They guide developers and users in making responsible choices to align AI systems with human values and interests, ensuring their advancement benefits humanity and society.